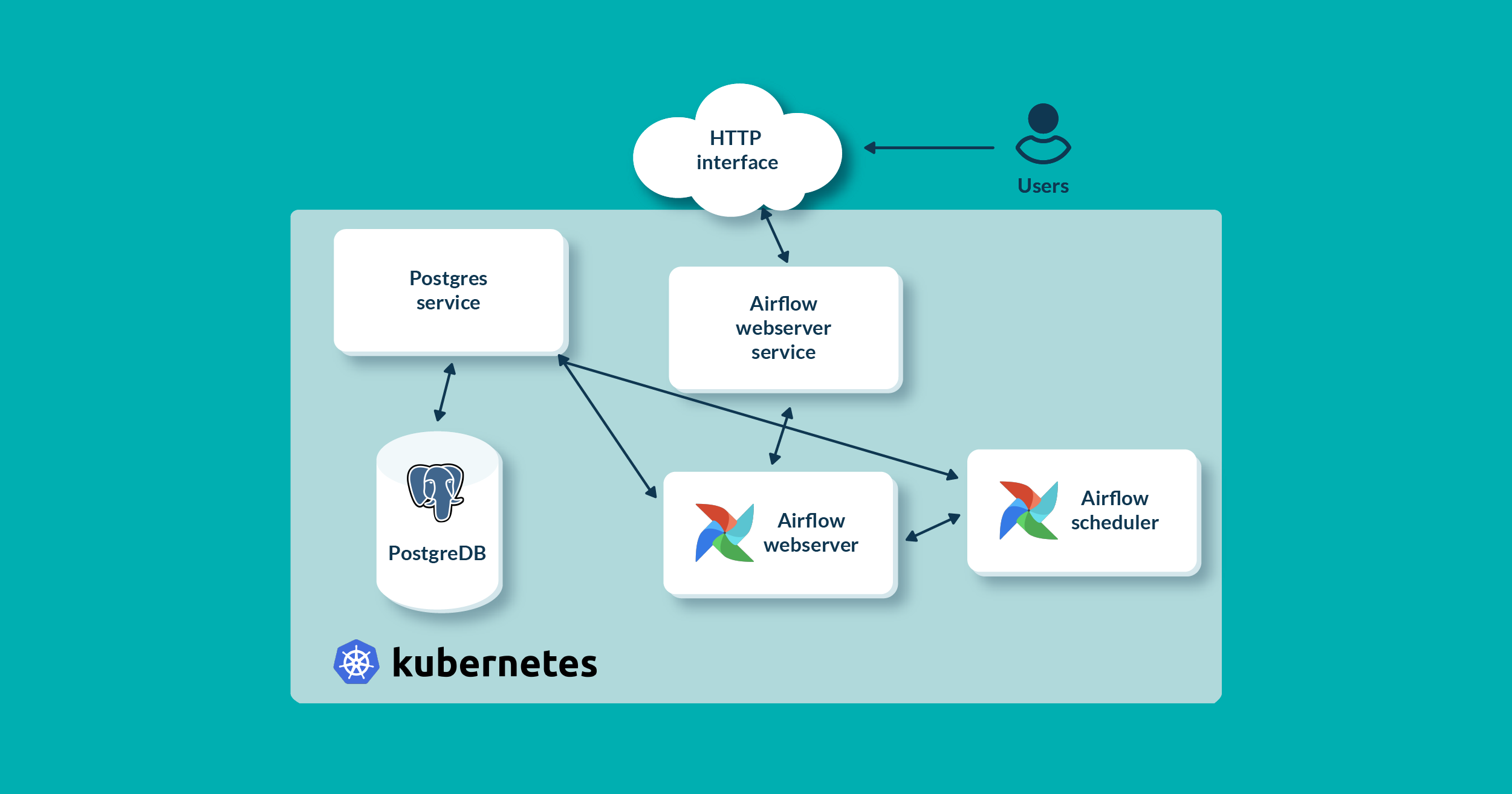

Apache Airflow is an open-source distributed workflow management platform for authoring, scheduling, and monitoring multi-stage workflows. It is designed to be extensible, and it's compatible with several services like Amazon Elastic Kubernetes Service (Amazon EKS), Amazon Elastic Container Service (Amazon ECS), and Amazon EC2. Many AWS customers choose to run Airflow on containerized environments with tools such as Amazon EKS or Amazon ECS because they make it easier to manage and autoscale Airflow clusters. This post shows you how you can operate a self-managed Airflow cluster using Amazon EKS and optimize it for cost using EC2 Spot Instances.

The infrastructure required to run Airflow can be put into two categories. First, the infrastructure needed to run Airflow's core components, such as its web-UI and scheduler. Second, the infrastructure required to run Airflow workers, which execute workflows. In a typical Airflow cluster, the workers' resource consumption far outweighs that of the core components. Workers do the heavy lifting in an Airflow cluster. In contrast, the UI and scheduler are lightweight processes (by default, they are allocated 1.5 vCPUs and 1.5 GB memory combined). Whether your workflow is an ETL job, a media processing pipeline, or a machine learning workload, an Airflow worker runs it. If you run these workers on Spot instances, you can reduce the cost of running your Airflow cluster by up to 90%.

Spot instances are spare EC2 capacity offered to you at a discounted price compared to On-Demand Instance prices. Spot helps you reduce your AWS bill with minimal changes to your infrastructure. What makes Spot instances different from On-Demand EC2 instances is that when EC2 needs capacity, it can reclaim capacity from Spot with a two-minute notice. This may result in the termination of a Spot instance in your AWS account. That may sound daunting at first, but in fact, the average frequency of Spot-initiated interruption across all AWS Regions and instance types is less than 5%. In other words, on average there is less than a 5% chance that EC2 will reclaim your Spot instance before you intentionally terminate it.

Given Airflow's architecture, we advise against running your entire cluster on Spot. Core components like the web-UI are critical to users. Suppose the web-UI runs on a Spot instance and that instance gets interrupted: users may lose access to the dashboard until Kubernetes recreates the Pod. Using Kubernetes constructs and EKS data plane options, you can ensure that critical applications run on On-Demand instances, while workers run on the cheaper compute capacity provided by Spot.

Amazon EKS data plane

An EKS cluster consists of two primary components: the EKS control plane and EKS nodes that are registered with the control plane.

Amazon EKS on AWS Fargate: Running Amazon EKS on AWS Fargate gives you a fully managed data plane in which you are not responsible for creating and managing servers to run your pods.
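As a rough illustration of keeping core components on On-Demand capacity while workers run on Spot, the sketch below builds the `nodeSelector` part of a pod spec for each Airflow component. It assumes the nodes come from EKS managed node groups, which label nodes with `eks.amazonaws.com/capacityType` set to `ON_DEMAND` or `SPOT`; self-managed nodes would need an equivalent label of your own. The component names and helper functions are hypothetical and are not taken from the post or from Airflow's Helm chart.

```python
import json

# Node label that EKS managed node groups set on their nodes (assumption:
# the cluster uses managed node groups; otherwise apply your own label).
CAPACITY_TYPE_LABEL = "eks.amazonaws.com/capacityType"

# Hypothetical component names, used for illustration only.
CORE_COMPONENTS = {"webserver", "scheduler"}


def capacity_type_for(component: str) -> str:
    """Core components stay on On-Demand; everything else may run on Spot."""
    return "ON_DEMAND" if component in CORE_COMPONENTS else "SPOT"


def pod_spec_fragment(component: str) -> dict:
    """Return the nodeSelector portion of a pod spec for the component."""
    return {"nodeSelector": {CAPACITY_TYPE_LABEL: capacity_type_for(component)}}


if __name__ == "__main__":
    for name in ("webserver", "scheduler", "worker"):
        print(name, json.dumps(pod_spec_fragment(name)))
```

A `nodeSelector` is the simplest of the Kubernetes scheduling constructs. Node affinity or taints and tolerations could express the same split with more flexibility, for example by letting workers fall back to On-Demand nodes when no Spot capacity is available.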
